CrawlKit

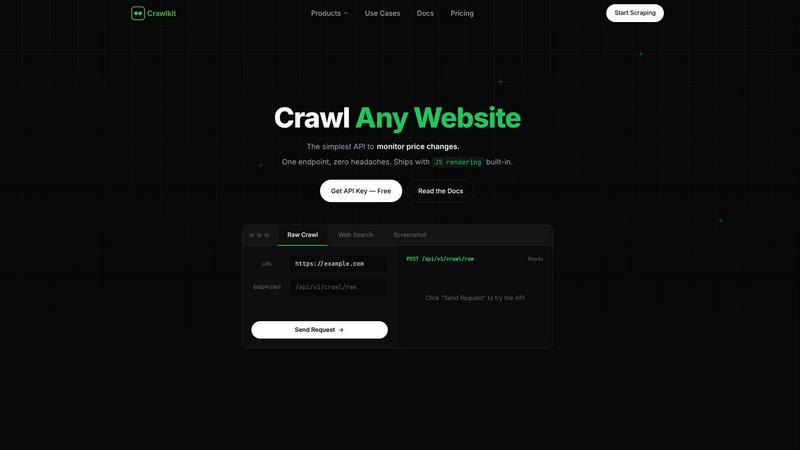

CrawlKit is an API-first platform for effortless data extraction from any website, streamlining your data collection.


About CrawlKit

CrawlKit is a web data extraction platform for developers and data teams who need reliable, scalable access to web data without building or maintaining their own scraping infrastructure. Scraping at scale means dealing with rotating proxies, headless browsers, anti-bot protections, and rate limits, plus continuous maintenance as target websites change. With CrawlKit, users simply send a request, and the platform handles proxy rotation, browser rendering, retries, and block handling. Teams can then focus on using the data instead of on the details of collecting it.

CrawlKit offers a single, consistent interface for a wide range of extraction needs, including raw page content, search results, visual snapshots, and professional data from platforms such as LinkedIn, which makes it a strong option for anyone who depends on robust web data.

Features of CrawlKit

One API for Multiple Data Sources

CrawlKit provides a single API that allows users to extract structured data from various websites, social platforms, and app stores with just one request. This unified approach simplifies the data collection process, eliminating the need for multiple tools and disparate systems.

Seamless Integration with Popular Platforms

CrawlKit integrates with popular tools and services such as AWS, Node.js, Puppeteer, and Google, among others, so users can add it to their existing workflows regardless of their technology stack.

Complete and Clean Data Outputs

CrawlKit is designed to return complete, clean data for every extraction request. The platform waits for pages to finish loading and validates responses before returning them, reducing the risk of partial or broken output.

Flexible Credit-Based Pricing Model

CrawlKit uses a simple credit-based, pay-as-you-go pricing model. Pricing is transparent, with no hidden fees or surprise charges, so users can scale their data extraction efficiently.

Use Cases of CrawlKit

CRM Enrichment

CrawlKit can automatically enrich Customer Relationship Management (CRM) systems with LinkedIn profile data. Teams can pull job titles, company information, and contact details for each lead, enhancing their sales and marketing efforts.
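As an illustration of the enrichment step, the sketch below merges scraped profile fields into existing CRM lead records. It assumes the profile data has already been fetched and keyed by LinkedIn URL; the field names (`linkedin_url`, `job_title`, `company`, `email`) are hypothetical and not CrawlKit's documented response schema. The design choice here is to fill only missing fields, so values already curated in the CRM are never overwritten.

```python
from typing import Dict, List

# Hypothetical field names; CrawlKit's actual LinkedIn response schema may differ.
ENRICHABLE_FIELDS = ("job_title", "company", "email")


def enrich_leads(
    leads: List[Dict[str, str]],
    profiles_by_url: Dict[str, Dict[str, str]],
) -> List[Dict[str, str]]:
    """Return copies of `leads` with missing fields filled from scraped profiles.

    Existing CRM values are kept; only empty or absent fields are filled.
    """
    enriched = []
    for lead in leads:
        merged = dict(lead)  # copy so the caller's records are not mutated
        profile = profiles_by_url.get(lead.get("linkedin_url", ""), {})
        for field in ENRICHABLE_FIELDS:
            if not merged.get(field) and profile.get(field):
                merged[field] = profile[field]
        enriched.append(merged)
    return enriched
```

For example, a lead that already lists `company` as "Acme" keeps that value, while an empty `job_title` is filled from the matching profile.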

Competitor Analysis

Data teams can use CrawlKit for in-depth competitor analysis by extracting metrics from social media platforms such as Instagram, including follower counts, engagement rates, and top-performing posts, to gain insight into competitors' strategies.
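Once post metrics have been extracted, ranking posts takes only a few lines. The sketch below uses one common definition of per-post engagement rate, (likes + comments) / followers × 100; this formula and the `Post` fields are assumptions for illustration, not a metric CrawlKit itself defines or returns.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    # Hypothetical fields; real extracted post data may use other names.
    post_id: str
    likes: int
    comments: int


def engagement_rate(post: Post, followers: int) -> float:
    """Per-post engagement rate in percent: (likes + comments) / followers * 100."""
    if followers <= 0:
        raise ValueError("followers must be positive")
    return (post.likes + post.comments) / followers * 100


def top_posts(posts: List[Post], followers: int, n: int = 3) -> List[Post]:
    """Return the n posts with the highest engagement rate, best first."""
    return sorted(posts, key=lambda p: engagement_rate(p, followers), reverse=True)[:n]
```

With 10,000 followers, a post with 450 likes and 50 comments has an engagement rate of 5%.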

Market Research

Researchers can leverage CrawlKit to gather valuable market research data by extracting reviews and ratings from app stores. This information can be used to assess product performance and consumer sentiment, providing a competitive edge.
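A minimal sketch of summarizing extracted app store ratings is shown below. It assumes the ratings have already been collected as integers on a 1 to 5 star scale; the function name and output keys are hypothetical.

```python
from collections import Counter
from typing import Dict, List


def summarize_ratings(ratings: List[int]) -> Dict[str, object]:
    """Summarize 1-5 star ratings: count, mean, and per-star distribution."""
    if not ratings:
        return {"count": 0, "mean": None, "distribution": {}}
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be between 1 and 5")
    counts = Counter(ratings)
    return {
        "count": len(ratings),
        "mean": round(sum(ratings) / len(ratings), 2),
        # Include every star level so missing levels show up as 0.
        "distribution": {star: counts.get(star, 0) for star in range(1, 6)},
    }
```

The per-star distribution is often more informative than the mean alone, since a cluster of 1-star reviews can point to a specific product problem.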

Data-Driven Decision Making

Businesses can use CrawlKit to gather actionable insights from various online sources, enabling data-driven decision-making. Whether it's tracking trends or monitoring sentiment, CrawlKit provides the structured data necessary for informed strategies.

Frequently Asked Questions

How does CrawlKit handle website changes?

CrawlKit is designed to adapt automatically to changes in website structure. This reduces the need for users to keep updating their own scraping scripts or tools and helps maintain continuous access to reliable data.

Is there a limit on the number of API calls?

CrawlKit uses a flexible credit-based system with no rate limits. Users can make as many API calls as their credits allow, and credits can be purchased in bulk for better pricing.

What programming languages can I use with CrawlKit?

CrawlKit is a simple HTTP API that can be accessed from any programming language or platform, including popular ones like Node.js, Python, and Ruby, among others.
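Because the interface is plain HTTP, a standard-library client is enough. The sketch below uses Python's `urllib.request`; the endpoint URL (`https://api.example.com/v1/scrape`), the JSON payload shape, and the Bearer-token header are placeholders, not CrawlKit's documented API, so consult the official documentation for the real values. Request construction is separated from sending so it can be inspected without network access.

```python
import json
import urllib.request

# Hypothetical endpoint, payload, and auth scheme: replace with CrawlKit's documented values.
API_URL = "https://api.example.com/v1/scrape"


def build_scrape_request(target_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request asking the API to fetch target_url."""
    body = json.dumps({"url": target_url}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # hypothetical auth scheme
        },
    )


def scrape(target_url: str, api_key: str, timeout: float = 60.0) -> dict:
    """Send the request and decode the JSON response body."""
    req = build_scrape_request(target_url, api_key)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

The same pattern (a JSON POST with an authorization header) carries over directly to Node.js, Ruby, or any other language with an HTTP client.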

Can I request a custom API integration?

Yes, CrawlKit allows users to request custom API integrations for specific data sources that are not already available. The team is committed to building solutions that meet user needs effectively.


Similar to CrawlKit

Subiq simplifies SaaS subscription management for small teams, helping you track tools, control costs, and avoid unexpected charges.

WhatTime.dev provides real-time global time zone conversion, meeting planning, and local time details for teams and travelers worldwide.

GhostlyX is a privacy-first web analytics platform that delivers actionable insights without compromising user privacy or using cookies.

Backed by peer-reviewed research, this app quantifies your daily microplastic intake from food, drink, and air to help you minimize exposure.

Webleadr instantly finds and contacts businesses without websites, helping you effortlessly generate web design leads from anywhere.

TubeAnalytics uses AI and official YouTube data to help creators predict video performance and optimize revenue growth.

Metric Nexus simplifies marketing data by unifying insights from multiple platforms and enabling natural language queries with Claude.

TrafficClaw transforms your SEO and analytics data into actionable insights through natural language conversations for smarter growth.