Agenta vs qtrl.ai

Side-by-side comparison to help you choose the right product.

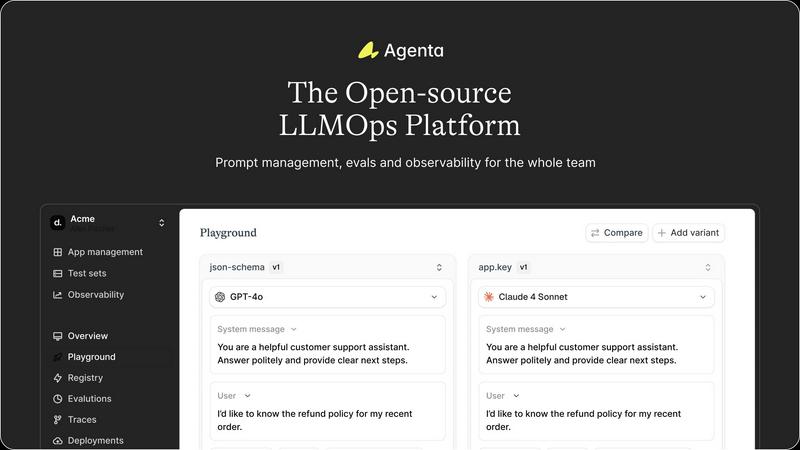

Agenta is an open-source LLMOps platform that streamlines collaboration for building and managing reliable LLM applications.

Last updated: March 1, 2026

qtrl.ai

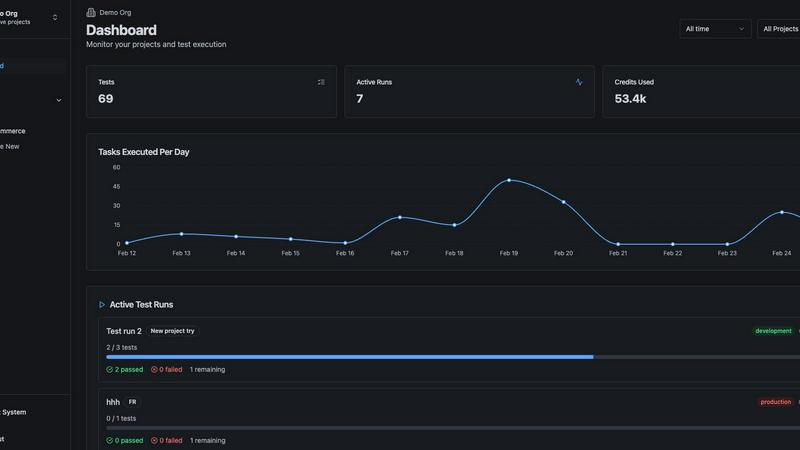

qtrl.ai empowers QA teams to scale testing with AI agents while maintaining full control and governance throughout.

Last updated: March 4, 2026


Feature Comparison

Agenta

Centralized Prompt Management

Agenta provides a unified platform for storing and managing prompts, evaluations, and traces. This centralization allows teams to easily access and collaborate on prompts without the confusion of disparate tools, ensuring a more organized workflow.

Automated Evaluation Processes

With Agenta, teams can implement automated evaluation processes that replace guesswork with systematic experimentation. Users can create experiments, track results, and validate changes, allowing for evidence-based decision-making in LLM development.

Comprehensive Observability

Agenta offers robust observability features that allow teams to trace requests and pinpoint failure points in production systems. This functionality is critical for debugging and helps maintain high performance by providing insights into how models behave in real-world scenarios.

Collaborative Development Environment

Agenta fosters collaboration among product managers, developers, and domain experts. Its intuitive UI enables non-technical team members to participate in prompt editing and evaluation processes, bridging the gap between technical and non-technical stakeholders.

qtrl.ai

Autonomous QA Agents

qtrl.ai features autonomous QA agents that can execute instructions on demand or continuously across multiple environments. These agents operate within predefined rules, ensuring compliance while performing real browser executions instead of simulations. This capability allows teams to efficiently scale their testing efforts without losing oversight.

Enterprise-Grade Test Management

With a centralized system for managing test cases, plans, and runs, qtrl.ai provides comprehensive traceability and audit trails. This feature supports both manual and automated workflows, making it particularly well-suited for enterprises that require stringent compliance and governance in their QA processes.

Progressive Automation

Teams start with human-written instructions and can gradually incorporate AI-generated tests. Once teams connect their requirements, qtrl.ai automatically generates and executes tests, all of which remain fully reviewable. This progressive approach lets teams scale automation at their own pace while keeping oversight.

Adaptive Memory

qtrl.ai uses adaptive memory to build a living knowledge base of the application, learning from exploration, test execution, and reported issues. This knowledge base powers context-aware test generation that becomes more effective with each interaction.

Use Cases

Agenta

Rapid Prototype Development

Agenta can accelerate prototyping of LLM applications. By providing a structured environment for prompt experimentation and evaluation, it lets teams iterate quickly and refine their applications based on immediate feedback.

Cross-Functional Team Collaboration

With Agenta, cross-functional teams can collaborate more effectively. Product managers, developers, and domain experts can work together in a single workflow, enhancing communication and reducing the chances of misalignment throughout the development process.

Systematic Error Debugging

When issues arise in production, Agenta's observability tools allow teams to trace requests and identify the root causes of errors. This capability transforms debugging from guesswork into a systematic process, improving the reliability of LLM applications.

Evidence-Based Model Evaluation

Agenta enables teams to replace subjective assessments with evidence-based evaluations of model performance. By integrating feedback from domain experts and running systematic experiments, teams can make informed decisions about model adjustments and improvements.

qtrl.ai

Product-Led Engineering Teams

Product-led engineering teams can use qtrl.ai to manage their testing processes. By combining manual and automated testing, these teams can keep product development cycles fast without sacrificing quality.

QA Teams Scaling Beyond Manual Testing

For QA teams transitioning from manual testing to automation, qtrl.ai offers a seamless path forward. Teams can start with basic automation and gradually adopt more advanced features, ensuring a controlled and manageable scaling process.

Companies Modernizing Legacy QA Workflows

Organizations looking to modernize outdated QA workflows can benefit greatly from qtrl.ai. The platform integrates easily with existing tools and supports CI/CD pipelines, making it an ideal choice for companies aiming to enhance efficiency while maintaining quality standards.

Enterprises Requiring Governance and Traceability

Enterprises that need to adhere to strict governance and audit requirements can rely on qtrl.ai's comprehensive traceability and audit trails. This ensures that all test activities are documented, providing the necessary transparency and accountability for compliance purposes.

Overview

About Agenta

Agenta is an open-source LLMOps platform that gives AI development teams the infrastructure to build, evaluate, and deploy reliable Large Language Model (LLM) applications. It targets two common problems in modern AI development: the unpredictability of LLMs and the lack of structured, collaborative processes. Without such processes, prompts end up scattered across tools like Slack, Google Sheets, and email, teams work in silos, and changes are deployed without validation.

Agenta acts as a central hub for developers, product managers, and subject matter experts, supporting prompt experimentation, systematic evaluation, and production debugging with real data. Its core value proposition is turning chaotic workflows into repeatable, evidence-based LLMOps practices. By combining prompt management, automated evaluation, and observability, Agenta helps teams iterate quickly, validate changes, and maintain visibility into system performance, reducing both risk and time-to-production for LLM-driven features.

About qtrl.ai

qtrl.ai is a quality assurance (QA) platform built to help software teams scale their QA efforts while maintaining control and governance. By combining enterprise-grade test management with AI automation, it serves as a central hub where teams organize test cases, plan test runs, and trace requirements to test coverage. Its real-time dashboards show what has been tested, what is passing, and where risks remain, giving engineering leads and QA managers the visibility they need.

What distinguishes qtrl.ai is its progressive AI layer, which introduces automation in phases. Teams can begin with manual test management and gradually move to the built-in autonomous agents. These agents can create UI tests from plain-English descriptions, adapt to application changes, and execute tests across browsers and environments at scale. This makes qtrl.ai a good fit for product-led engineering teams, QA groups moving beyond manual testing, organizations modernizing outdated workflows, and enterprises that prioritize compliance and audit trails. Ultimately, qtrl.ai aims to bridge the gap between slow manual testing and the complexity of traditional automation, offering a reliable path to faster, smarter quality assurance.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps, or Large Language Model Operations, refers to the practices and frameworks involved in managing the lifecycle of LLM applications, including their development, evaluation, deployment, and monitoring.

How does Agenta improve collaboration among teams?

Agenta improves collaboration by providing a centralized platform where product managers, developers, and domain experts can work together. This eliminates silos and allows for transparent communication and shared access to prompts and evaluations.

Can Agenta integrate with existing tools and frameworks?

Yes, Agenta is designed to integrate seamlessly with various frameworks and tools, including LangChain and OpenAI. This flexibility allows teams to leverage their existing tech stack without vendor lock-in.

Is Agenta suitable for teams new to LLM development?

Absolutely. Agenta is designed to support teams at all levels of LLM maturity. Its structured processes and user-friendly interface make it an excellent choice for both newcomers and experienced teams looking to optimize their workflows.

qtrl.ai FAQ

What makes qtrl.ai different from traditional QA platforms?

qtrl.ai stands out by integrating AI automation with robust test management in a progressive manner. This allows teams to maintain control while gradually adopting automated testing, unlike traditional platforms that often require a complete shift to automation.

Can teams start with manual testing on qtrl.ai?

Yes, qtrl.ai is designed to accommodate teams at various stages of their testing journey. Teams can begin with manual test management and introduce automation as they become more comfortable, making the transition smooth and manageable.

How does qtrl.ai ensure compliance and traceability?

qtrl.ai provides comprehensive traceability and audit trails for all test management activities. This feature supports enterprises in maintaining compliance with regulatory standards by documenting every step of the QA process.

Is qtrl.ai suitable for small teams as well as large enterprises?

Absolutely. qtrl.ai is flexible and scalable, making it suitable for small teams looking to improve their QA processes as well as large enterprises that require stringent governance and automation capabilities.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed specifically for AI development teams to build, evaluate, and manage reliable large language model applications. It serves as a centralized hub that addresses common challenges in modern AI workflows, such as the unpredictability of LLMs and fragmented collaboration among teams. Users often seek alternatives to Agenta for various reasons, including pricing considerations, specific feature requirements, or compatibility with different technical environments. When choosing an alternative, it's important to assess the platform's capabilities in prompt management, evaluation automation, and observability to ensure it meets your team's unique needs and enhances productivity.

qtrl.ai Alternatives

qtrl.ai is a quality assurance (QA) platform designed to help software teams strengthen their testing with AI-driven automation while maintaining control and governance. It serves as a central hub for organizing test cases, planning test runs, and tracking quality metrics, giving teams clear visibility into their testing processes. Users often seek alternatives to qtrl.ai for various reasons, including pricing structures, feature sets, and specific platform requirements. When evaluating other options, consider how easily each integrates with existing workflows, how much automation it offers, and how well it handles compliance and auditing. The right solution should fit the team's way of working and offer the support and scalability needed for long-term success.