Giga AI vs OpenMark AI

Side-by-side comparison to help you choose the right product.

Giga AI improves AI-assisted coding by reducing errors and keeping your AI assistant's output aligned with your project goals.

Last updated: February 28, 2026

OpenMark AI benchmarks more than 100 LLMs on your specific tasks, giving you quick insight into cost, speed, quality, and stability with minimal setup.

Last updated: March 26, 2026

Visual Comparison

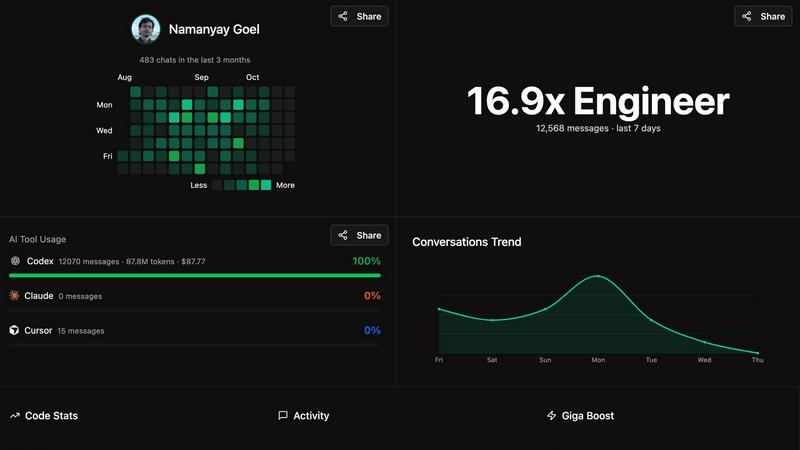

Giga AI

OpenMark AI

Feature Comparison

Giga AI

Contextual Rule Generation

Giga AI automatically analyzes your codebase and generates contextual rules that steer AI coding assistants toward relevant code. The goal is to keep the AI aligned with your existing architecture from the very first prompt.

Enhanced AI Understanding

By giving AI tools a project-specific understanding, Giga reduces common issues such as hallucinations and misreadings of the codebase. The result is higher-quality generated code that fits your needs, with fewer errors to fix.

Seamless Integration with Popular IDEs

Giga AI installs as an extension in popular editors and coding tools such as Cursor, VS Code, and Claude Code. Setup is quick, so developers can start using it almost immediately without major disruption to their workflow.

Time-Saving Automation

Builders using Giga AI report saving an average of about 20 hours per month, because AI-generated code contains fewer mistakes and hallucinations. That time goes back into building their projects instead of debugging.

OpenMark AI

User-Friendly Task Configuration

OpenMark AI lets users describe benchmarking tasks in plain language through its task configuration interface. This makes it possible for people without extensive technical knowledge to set up tests and get meaningful results.

Comprehensive Model Comparison

The platform can benchmark more than 100 AI models, so users can see which ones perform best on their specific tasks. Teams can then base model decisions on measured, real-world performance.

Real-Time API Results

OpenMark AI shows side-by-side results from real API calls, so the numbers reflect how each model actually performed rather than pre-cached figures. This lets developers see how different models behave under the same conditions.

Cost Efficiency Analysis

OpenMark AI also analyzes cost efficiency. Users see both the quality of each model's outputs and what those outputs cost, which helps them weigh quality against spend when choosing an AI model.

Use Cases

Giga AI

Solo Founders Building MVPs

Giga AI suits solo founders, including non-technical entrepreneurs, who want to launch an MVP quickly. Because it cuts the time spent debugging and correcting AI errors, founders can focus more on the creative side of their project.

Teams Enhancing Productivity

For experienced engineers and team leads, Giga AI can boost team productivity. More consistent and reliable code generation helps teams shorten development cycles and improve overall code quality.

Freelance Developers Managing Client Projects

Freelancers can use Giga AI to code more efficiently, especially on complex client projects with tight deadlines. Less time spent fixing bugs means faster delivery.

AI Consultants Streamlining Workflows

AI consultants can use Giga AI to streamline their workflows by keeping the AI tools they rely on focused on relevant code with fewer errors. This can lead to better client outcomes and higher satisfaction.

OpenMark AI

Model Selection for AI Features

Developers can use OpenMark AI to choose the most suitable model for an AI-driven feature by benchmarking candidate models on the specific task. This helps ensure the chosen model meets both performance goals and budget constraints.

Pre-Deployment Validation

Product teams can validate their model choices before deployment by testing outputs for consistency and quality. This lowers the risk of shipping a less effective model and makes the move from development to production smoother.

Cost-Benefit Analysis

Businesses seeking to optimize their AI spending can leverage OpenMark AI to perform a detailed cost-benefit analysis. By comparing the actual costs of API calls with the outputs generated, organizations can identify the best value options.

Research and Development

Researchers can use OpenMark AI to experiment with different models for academic or product development work, testing hypotheses about how models perform across tasks and environments.

Overview

About Giga AI

Giga AI is a context engineering platform built to tackle a common problem in AI-assisted software development: errors introduced by the AI. It acts as a "project brain" for AI coding assistants, giving them a deep, persistent understanding of a user's codebase, goals, and preferences. It is aimed at a broad range of builders, from solo founders and non-technical entrepreneurs launching minimum viable products (MVPs) to experienced engineers and team leads who want higher productivity and better code quality.

Giga AI's main value is cutting the time lost to debugging AI hallucinations, re-prompting, and fixing context-related mistakes, with a reported 72% reduction in bugs. Giga installs as an extension in popular development tools such as Cursor, VS Code, and Claude Code, then automatically analyzes the project to generate contextual rules that keep code generation coherent and consistent. The aim is to turn AI from a frustrating, error-prone assistant into a reliable partner in the development workflow.

About OpenMark AI

OpenMark AI is a web application built for task-level benchmarking of large language models (LLMs). Users describe what they want to test in plain language and can benchmark more than 100 AI models in a single session. Because identical prompts run across every model, users can compare cost per request, latency, scored quality, and stability side by side. Stability captures how much a model's outputs vary, so conclusions do not rest on a single, possibly misleading result. This is especially valuable for developers and product teams who need to evaluate or validate models before shipping AI-powered features.
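To make the idea of comparing models on repeated runs more concrete, here is a minimal Python sketch of how results for one shared prompt might be summarized per model. It is an illustration under stated assumptions, not OpenMark AI's implementation: the `RunResult` record, its fields, the 0 to 10 score scale, and the use of the population standard deviation of scores as the "stability" measure are all hypothetical choices.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class RunResult:
    """One response from one model to the shared prompt (hypothetical schema)."""
    model: str
    cost_usd: float   # cost of this single request
    latency_s: float  # wall-clock time for the request, in seconds
    score: float      # quality score for the output (assumed 0-10 scale)


def summarize(runs: list[RunResult]) -> dict[str, dict[str, float]]:
    """Group repeated runs by model and report mean cost, latency, and quality,
    plus the spread of quality scores as a simple (assumed) stability measure."""
    by_model: dict[str, list[RunResult]] = {}
    for run in runs:
        by_model.setdefault(run.model, []).append(run)

    summary: dict[str, dict[str, float]] = {}
    for model, model_runs in by_model.items():
        scores = [r.score for r in model_runs]
        summary[model] = {
            "cost_per_request": mean(r.cost_usd for r in model_runs),
            "latency_s": mean(r.latency_s for r in model_runs),
            "quality": mean(scores),
            # Population standard deviation of scores: lower means more stable.
            "score_stdev": pstdev(scores),
        }
    return summary


if __name__ == "__main__":
    # Hypothetical data: three runs of the same prompt on two placeholder models.
    runs = [
        RunResult("model-a", 0.002, 1.0, 8.0),
        RunResult("model-a", 0.002, 1.2, 8.0),
        RunResult("model-a", 0.002, 1.1, 8.0),
        RunResult("model-b", 0.001, 0.5, 10.0),
        RunResult("model-b", 0.001, 0.7, 6.0),
        RunResult("model-b", 0.001, 0.6, 8.0),
    ]
    for model, stats in summarize(runs).items():
        print(model, stats)
```

In this made-up data both models average the same quality score, but model-b's scores swing widely between runs. Looking at only one run of model-b could make it appear much better or much worse than model-a, which is the kind of misleading single result that a variance-based stability metric is meant to expose.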

OpenMark AI uses a credit system for hosted benchmarking, so you do not have to manage separate API keys for each provider, and comparisons need little configuration. Results come from real API calls rather than pre-cached marketing data, which makes the tool useful for teams that prioritize cost efficiency and consistent performance over simply picking the lowest per-token price. The platform supports a wide range of models and is designed for pre-deployment decisions, helping teams pick the model that best fits their workflow and budget. OpenMark AI offers both free and paid plans.

Frequently Asked Questions

Giga AI FAQ

How does Giga AI reduce debugging time?

Giga AI minimizes debugging time by generating contextual rules that guide AI coding assistants, thus reducing the occurrence of hallucinations and errors in code generation.

Can Giga AI be used with multiple IDEs?

Yes. Giga AI installs as an extension in popular development tools such as Cursor, VS Code, and Claude Code, so you can set it up quickly and use it across different environments.

What is the average time saved by using Giga AI?

Users report an average time savings of approximately 20 hours per month when using Giga AI, thanks to reduced mistakes and improved code quality.

Is there a trial period available for Giga AI?

Yes, Giga AI offers a free trial period, allowing users to explore its features and benefits risk-free before committing to a subscription.

OpenMark AI FAQ

What types of models can I benchmark with OpenMark AI?

OpenMark AI supports a wide variety of models from leading AI providers, including OpenAI, Anthropic, and Google, enabling users to benchmark over 100 different LLMs.

Do I need to manage multiple API keys to use OpenMark AI?

No, OpenMark AI streamlines the process by utilizing a credit system for hosted benchmarking, which means you do not need to configure separate API keys for each model comparison.

Is OpenMark AI suitable for non-technical users?

Yes, the user-friendly interface allows individuals without extensive technical knowledge to easily describe tasks and benchmark models, making it accessible to a broader audience.

What kind of results can I expect from OpenMark AI?

Users can expect detailed results that include cost per request, latency, scored quality, and stability metrics, allowing for a comprehensive evaluation of model performance based on real API calls.

Alternatives

Giga AI Alternatives

Giga AI is a context engineering platform that aims to reduce coding errors in AI-assisted software development. It acts as a "project brain" for AI coding assistants, helping them maintain a deep understanding of a user's codebase and preferences, which is especially useful for developers who want better code quality and less debugging. People look for alternatives for various reasons, including pricing, feature sets, and compatibility with their preferred development environment. When comparing options, consider how well each platform integrates with your existing tools, how effective its error-reduction features are, and the overall user experience and support. Weighing these factors will help you choose the tool that best fits your needs.

OpenMark AI Alternatives

OpenMark AI is a web application for benchmarking more than 100 large language models (LLMs) on your own tasks, measuring cost, speed, quality, and stability. It is particularly useful for developers and product teams who need to choose a model before deploying a feature. People look for alternatives for reasons such as pricing, feature sets, or platform compatibility. When comparing options, look at the user interface, the range of supported models, and the depth of the benchmarking features. Also review the pricing structure, including any free and paid plans, and the level of support offered for integration and everyday use. The right tool is the one that matches both your project requirements and your budget.