diffray vs OpenMark AI

Side-by-side comparison to help you choose the right product.

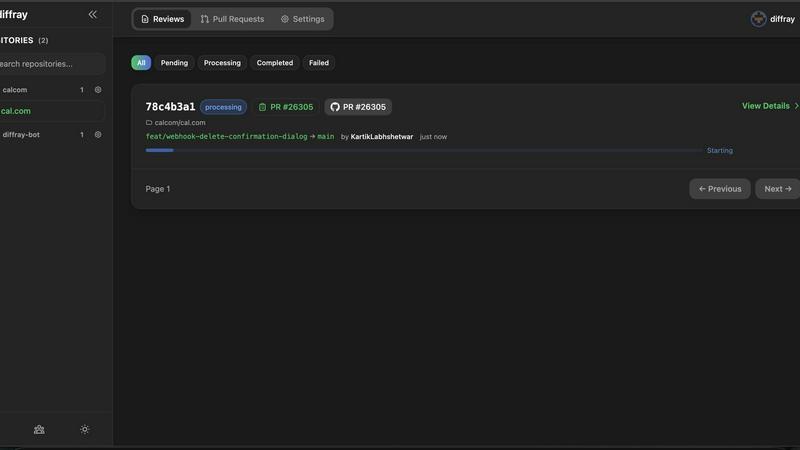

diffray

diffray's multi-agent AI catches real bugs with 87% fewer false positives than single-agent tools.

Last updated: February 28, 2026

OpenMark AI

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.


Overview

About diffray

diffray is an AI-powered code review assistant for development teams and engineering organizations that want to improve code quality, ship faster, and reduce the burnout that comes with manual code review.

At its core, diffray uses a multi-agent architecture: more than 30 specialized AI agents, each focused on a distinct domain such as security vulnerabilities, performance bottlenecks, bug patterns, language-specific best practices, and SEO considerations for web code. This ensemble approach lets diffray analyze pull requests (PRs) in context, evaluating the proposed changes against the entire codebase rather than in isolation.

The result is higher diagnostic accuracy: diffray reduces false positives by 87% and detects three times as many genuine, critical issues as generic, single-model AI tools. Because its findings are delivered directly into the developer's workflow, users report that the time they spend on PR review drops from an average of 45 minutes to 12 minutes per week. Its main value lies in raising code quality through context-aware automation, for teams that care about both quality and operational efficiency.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs. Repeat runs show you the variance, not a single lucky output.

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results from real API calls to the models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.