Claude Fast vs OpenMark AI

Side-by-side comparison to help you choose the right product.

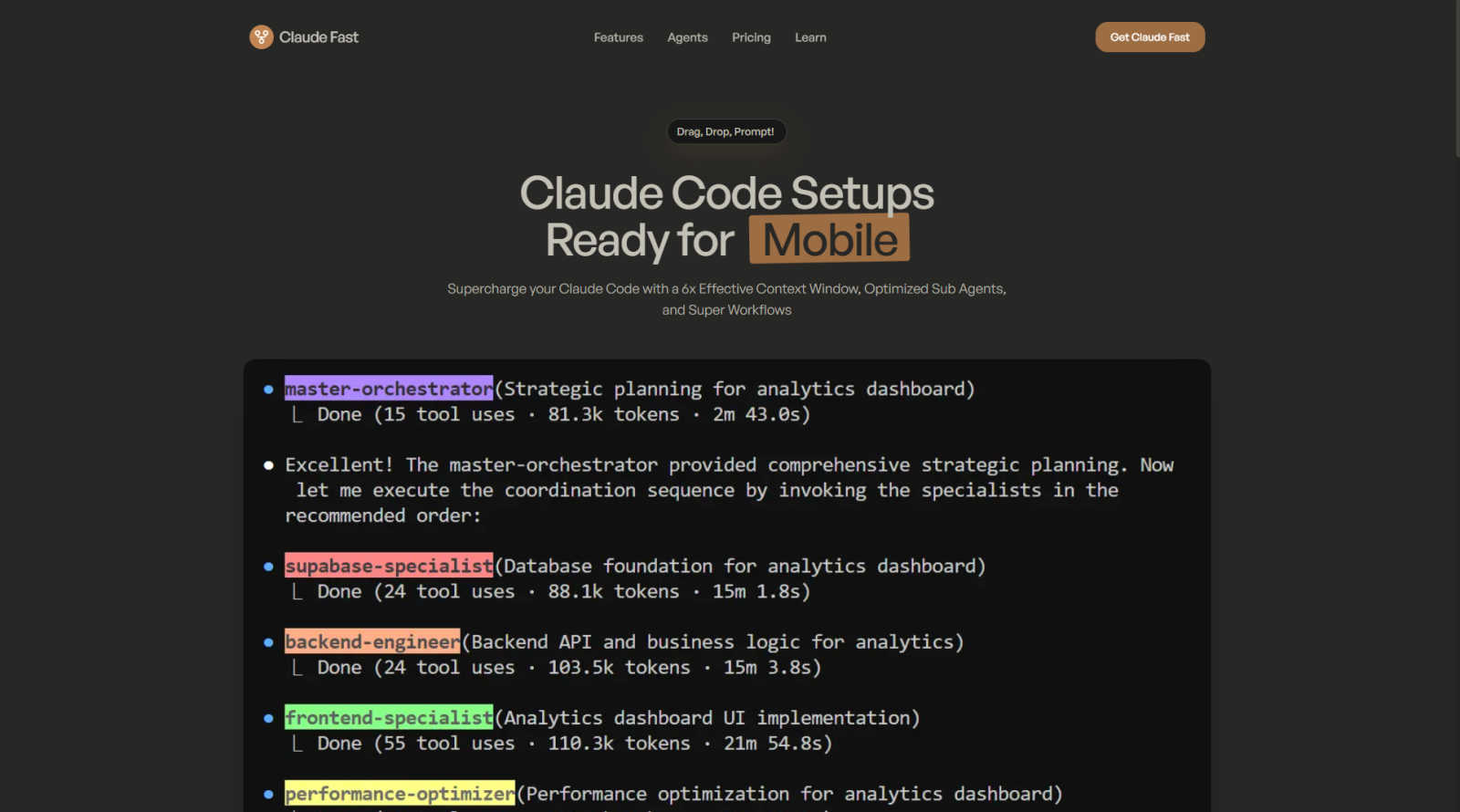

Claude Fast

Claude Fast extends Claude Code with specialized agents, expanded effective context, and automated workflows for faster, more reliable coding.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI benchmarks over 100 LLMs on your specific tasks and quickly reports cost, speed, quality, and stability, with no setup required.

Last updated: March 26, 2026

Feature Comparison

Claude Fast

Zero Forgotten Skills

Claude Fast includes a Skill Activation Hook that appends skill recommendations to each prompt before it reaches Claude. Claude Fast claims this yields 100% skill adherence: Claude Code loads the right skills when they are needed instead of overlooking them, which makes development more efficient.
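
The source does not say how the hook picks its recommendations. As a rough illustration only, the sketch below assumes simple keyword matching against a skill registry; the skill names, keywords, and functions (`recommend_skills`, `augment_prompt`) are hypothetical and not part of Claude Fast.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str                  # hypothetical skill identifier
    keywords: tuple[str, ...]  # words that suggest the skill is relevant


# Hypothetical registry: Claude Fast's real skills and triggers are not documented here.
SKILLS = (
    Skill("deploy-vps", ("deploy", "ssh", "server")),
    Skill("write-tests", ("test", "tests", "coverage")),
    Skill("db-migration", ("migration", "schema")),
)


def recommend_skills(prompt: str, skills: tuple[Skill, ...] = SKILLS) -> list[str]:
    """Return the names of skills whose keywords appear in the prompt (case-insensitive)."""
    words = set(re.findall(r"[a-z0-9]+", prompt.lower()))
    return [s.name for s in skills if words.intersection(s.keywords)]


def augment_prompt(prompt: str) -> str:
    """Append a recommendation line so the model sees relevant skills up front."""
    names = recommend_skills(prompt)
    if not names:
        return prompt  # nothing matched: pass the prompt through unchanged
    return f"{prompt}\n\n[Recommended skills: {', '.join(names)}]"
```

For example, `augment_prompt("Deploy the app to my server.")` returns the original prompt followed by `[Recommended skills: deploy-vps]`. A real implementation might use embeddings or an LLM call instead of keywords; keyword matching is used here only because it is easy to follow.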

Uninterrupted Flow

The Permission Hook uses an LLM to approve permission requests automatically and caches its decisions so repeated requests resolve quickly. This aims to balance speed and safety: the workflow stays fast without the risks of skipping permission checks altogether with a bypass flag.
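
How Claude Fast's Permission Hook works internally is not described. The hypothetical sketch below only shows the caching idea: each (tool, command) pair is classified once, and later identical requests reuse the stored decision. `CachedApprover` and `toy_classifier` are illustrative names, and the rule-based classifier is a stand-in for the LLM judgment the text mentions.

```python
from typing import Callable

Decision = str  # "allow" (approve automatically) or "ask" (defer to the user)


class CachedApprover:
    """Memoizes approval decisions per (tool, command) pair."""

    def __init__(self, classify: Callable[[str, str], Decision]):
        self._classify = classify  # stand-in for an LLM call
        self._cache: dict[tuple[str, str], Decision] = {}
        self.calls = 0             # how many times the classifier actually ran

    def decide(self, tool: str, command: str) -> Decision:
        key = (tool, command)
        if key not in self._cache:
            self.calls += 1
            self._cache[key] = self._classify(tool, command)
        return self._cache[key]


def toy_classifier(tool: str, command: str) -> Decision:
    """Rule-based placeholder for the LLM: escalate anything that looks destructive."""
    risky = ("rm -rf", "drop table", "git push --force")
    return "ask" if any(r in command.lower() for r in risky) else "allow"
```

Calling `CachedApprover(toy_classifier).decide("Bash", "npm test")` twice runs the classifier only once. Caching on the exact command string is deliberately conservative: a slightly different command is treated as a new request and classified again.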

Context Min-maxing

Claude Fast manages context by having the main Claude instance conserve its own context window, delegating work to sub-agents frequently. Each sub-agent is guided to make full use of its own temporary context window, so the system can work through complex tasks without the main session losing critical information.
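
The delegation pattern can be illustrated with a hypothetical sketch: a sub-agent may consume a large, short-lived context, but only a brief summary is added to the orchestrator's long-lived context. The classes below are illustrative, not Claude Fast's code, and `count_tokens` is a crude word-count stand-in for a real tokenizer.

```python
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


@dataclass
class SubAgent:
    name: str

    def run(self, task: str, working_notes: list[str]) -> str:
        # The sub-agent's context exists only for this call and may be large.
        context = [task, *working_notes]
        used = sum(count_tokens(c) for c in context)
        # Only a short summary leaves the sub-agent.
        return f"{self.name} finished '{task}' using {used} tokens"


@dataclass
class Orchestrator:
    context: list[str] = field(default_factory=list)

    def delegate(self, agent: SubAgent, task: str, working_notes: list[str]) -> None:
        summary = agent.run(task, working_notes)
        self.context.append(summary)  # main context grows by the summary only

    def context_tokens(self) -> int:
        return sum(count_tokens(c) for c in self.context)
```

Even if a sub-agent reads thousands of tokens of working notes, the orchestrator's context grows by only a few tokens per delegated task. That asymmetry is what lets the main session run longer.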

Native Task Sync

Native Task Sync provides bidirectional synchronization with Claude Code's task management system. Plans are converted into executable tasks that are tracked in documentation, which keeps the project organized and gives developers visibility into their progress.
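
The source does not specify the plan format. Assuming, purely for illustration, that a plan is a Markdown checklist, two-way sync comes down to parsing checkbox lines into task objects and writing their status back. `Task`, `plan_to_tasks`, and `tasks_to_plan` are hypothetical names.

```python
import re
from dataclasses import dataclass

# Matches "- [ ] title" (open) or "- [x] title" (done).
CHECKBOX = re.compile(r"^- \[( |x)\] (.+)$")


@dataclass
class Task:
    title: str
    done: bool


def plan_to_tasks(plan_md: str) -> list[Task]:
    """Turn each checklist line into a Task and ignore headings and prose."""
    tasks = []
    for line in plan_md.splitlines():
        m = CHECKBOX.match(line.strip())
        if m:
            tasks.append(Task(title=m.group(2), done=m.group(1) == "x"))
    return tasks


def tasks_to_plan(tasks: list[Task]) -> str:
    """Write task status back to the plan as a Markdown checklist."""
    return "\n".join(f"- [{'x' if t.done else ' '}] {t.title}" for t in tasks)
```

Marking a task done in code and calling `tasks_to_plan` produces an updated checklist, so the document and the task list stay in step in both directions.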

OpenMark AI

User-Friendly Task Configuration

OpenMark AI provides a task configuration interface where users describe their benchmarking tasks in plain language. Users without extensive technical knowledge can therefore set up tests and get meaningful results.

Comprehensive Model Comparison

The platform can benchmark a task against more than 100 AI models and shows which ones perform best on that specific task. Teams can then base their decisions on measured, real-world performance.

Real-Time API Results

OpenMark AI shows the results of real API calls side by side, so the data reflects how models actually perform. This helps developers see how different models behave under the same conditions.

Cost Efficiency Analysis

OpenMark AI also analyzes the cost efficiency of each model. Users see output quality alongside what each model costs, so they can make financially sound decisions when choosing an AI solution.
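
OpenMark AI's exact cost formula is not given. A common approach, assumed here, prices input and output tokens separately per million tokens and then ranks models by quality per dollar. The model names, prices, and quality scores in this sketch are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    model: str
    input_tokens: int
    output_tokens: int
    price_in_per_m: float   # USD per 1M input tokens (hypothetical)
    price_out_per_m: float  # USD per 1M output tokens (hypothetical)
    quality: float          # score in [0, 1] from whatever grader is used

    @property
    def cost(self) -> float:
        """Cost of one request in USD."""
        return (self.input_tokens * self.price_in_per_m
                + self.output_tokens * self.price_out_per_m) / 1_000_000


def rank_by_value(results: list[RunResult]) -> list[tuple[str, float]]:
    """Sort models by quality per dollar, best value first."""
    scored = [(r.model, r.quality / r.cost) for r in results if r.cost > 0]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

With 1,000 input and 500 output tokens, a model priced at $3/M input and $15/M output costs $0.0105 per request. A model at $0.50/M and $1.50/M costs $0.00125, so it can rank higher on value even with a lower quality score. This is the trade-off between cheapest per token and best overall.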

Use Cases

Claude Fast

Rapid MVP Development

Startups and entrepreneurs can leverage Claude Fast to accelerate the development of Minimum Viable Products (MVPs). By utilizing the specialized agents to handle various aspects of development, users can go from concept to live product in a fraction of the time, significantly reducing time-to-market.

Efficient Team Collaboration

Teams can utilize Claude Fast to streamline collaboration among developers, designers, and project managers. The system's intelligent routing and task synchronization allow for efficient delegation of responsibilities, making it easier for teams to work in parallel without stepping on each other's toes.

Comprehensive Code Deployment

Claude Fast simplifies the code deployment process through its Infra Master Skill, which enables users to SSH into and secure their VPS seamlessly. This functionality allows developers to focus on coding without the usual headaches associated with deployment logistics.

Enhanced Learning and Improvement

As users work with Claude Fast, the Self-Improvement Loop feature promotes continuous learning by capturing insights and optimizing skills automatically. This evolving system ensures that developers benefit from a constantly improving tool that adapts to their changing needs.

OpenMark AI

Model Selection for AI Features

Developers can utilize OpenMark AI to select the most appropriate model for their AI-driven features by benchmarking performance on specific tasks. This ensures that the chosen model aligns with both performance goals and budget constraints.

Pre-Deployment Validation

Product teams can validate their model choices before deployment by testing outputs for consistency and quality. This capability reduces the risk associated with deploying a less effective model, ensuring a smoother transition from development to production.

Cost-Benefit Analysis

Businesses seeking to optimize their AI spending can leverage OpenMark AI to perform a detailed cost-benefit analysis. By comparing the actual costs of API calls with the outputs generated, organizations can identify the best value options.

Research and Development

Researchers can use OpenMark AI to experiment with various models for academic or product development purposes. The tool allows for thorough testing of hypotheses regarding model performance across different tasks and environments.

Overview

About Claude Fast

Claude Fast is a pre-configured framework that turns Anthropic's Claude Code from a single AI assistant into an orchestrated development team. The system comprises 11 specialized AI agents, each with a distinct role, that collaborate on complex, multi-step projects. A key feature is native task management synchronization with Claude Code, which Claude Fast credits with a 6x improvement in effective context window utilization and with development sessions that can exceed 1 million tokens of effective context. The gain does not come from expanding memory; it comes from intelligent delegation and session management.

Claude Fast is aimed at founders, solopreneurs, and developers who want to ship production-ready code for web, mobile, and backend applications without sacrificing quality or getting bogged down in prompt engineering and agent coordination. Its "drag, drop, and prompt" setup is meant to eliminate the lengthy trial-and-error phase usually involved in building a robust multi-agent system.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Users describe their testing requirements in plain language and can benchmark over 100 AI models in a single session. Because identical prompts run across multiple models, users can compare cost per request, latency, scored quality, and stability, and see how much model outputs vary instead of relying on a single, potentially misleading result. This is particularly valuable for developers and product teams who need to evaluate or validate models before deploying AI-powered features.
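
The source does not say how OpenMark AI computes stability. One simple proxy, assumed in this hypothetical sketch, is to run the same prompt several times per model, then report the mean score, the score's standard deviation (lower means more stable), and median latency. `Trial` and `summarize` are illustrative names.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    model: str
    score: float      # graded quality of one run
    latency_s: float  # wall-clock seconds for one API call


def summarize(trials: list[Trial]) -> dict[str, dict[str, float]]:
    """Group repeated runs by model and report mean quality, score spread, and median latency."""
    by_model: dict[str, list[Trial]] = {}
    for t in trials:
        by_model.setdefault(t.model, []).append(t)
    report = {}
    for model, runs in by_model.items():
        scores = [r.score for r in runs]
        report[model] = {
            "mean_score": statistics.mean(scores),
            "score_stdev": statistics.pstdev(scores),  # stability proxy
            "median_latency_s": statistics.median([r.latency_s for r in runs]),
        }
    return report
```

Two models can share the same mean score while one has a much larger spread across runs. That difference is exactly what a single run would hide.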

OpenMark AI removes the need to manage multiple API keys by using a credit system for hosted benchmarking, so comprehensive comparisons require little configuration. Results come from actual API calls rather than pre-cached marketing data, which matters to teams that prioritize cost efficiency and consistent performance over simply picking the lowest per-token price. The platform supports a wide range of models and is designed to help teams make pre-deployment decisions, selecting the model best suited to their workflow and budget. OpenMark AI offers both free and paid plans.

Frequently Asked Questions

Claude Fast FAQ

How does Claude Fast improve context management?

Claude Fast enhances context management by employing a strategy that involves frequent task delegation and guiding sub-agents to maximize their temporary context windows. This allows for efficient information retention and utilization throughout development.

Can Claude Fast be used by solo developers?

Absolutely. Claude Fast is designed for founders, solopreneurs, and developers who need to ship production-ready code quickly and efficiently. Its user-friendly interface and specialized agents make it ideal for individual users.

What types of projects can benefit from Claude Fast?

Claude Fast is versatile and can be applied to a variety of projects, including web, mobile, and backend applications. Its framework is particularly beneficial for complex, multi-step projects that require coordinated efforts from multiple agents.

Is there ongoing support available for Claude Fast users?

Yes. Claude Fast offers priority support by email and Twitter, so help is available when users run into problems.

OpenMark AI FAQ

What types of models can I benchmark with OpenMark AI?

OpenMark AI supports a wide variety of models from leading AI providers, including OpenAI, Anthropic, and Google, enabling users to benchmark over 100 different LLMs.

Do I need to manage multiple API keys to use OpenMark AI?

No, OpenMark AI streamlines the process by utilizing a credit system for hosted benchmarking, which means you do not need to configure separate API keys for each model comparison.

Is OpenMark AI suitable for non-technical users?

Yes, the user-friendly interface allows individuals without extensive technical knowledge to easily describe tasks and benchmark models, making it accessible to a broader audience.

What kind of results can I expect from OpenMark AI?

Users can expect detailed results that include cost per request, latency, scored quality, and stability metrics, allowing for a comprehensive evaluation of model performance based on real API calls.

Alternatives

Claude Fast Alternatives

Claude Fast is an AI assistant in the intelligent-agents category, designed to enhance development workflows. By turning Anthropic's Claude Code into a cohesive multi-agent system, it helps users manage complex projects more efficiently and coherently. Users often look for alternatives because of pricing, specific feature requirements, or platform compatibility. When evaluating alternatives, consider ease of use, scalability, and how well each tool integrates with existing tools and workflows.

What is Claude Fast?

Claude Fast is a pre-configured framework that enhances Claude Code with a system of specialized AI agents for managing complex, multi-step development projects.

Who is Claude Fast for?

Claude Fast is designed for founders, solopreneurs, and developers who need to produce high-quality code quickly for web, mobile, and backend applications.

Is Claude Fast free?

Pricing details for Claude Fast are not provided here; check the official website for current information.

What are the main features of Claude Fast?

Its main features include skill activation hooks, context min-maxing, and intelligent routing for efficient task management.

OpenMark AI Alternatives

OpenMark AI is a powerful web application designed for benchmarking over 100 large language models (LLMs) on various tasks, focusing on key metrics such as cost, speed, quality, and stability. This tool is particularly beneficial for developers and product teams seeking to make informed decisions about AI model selection before deploying features. Users often search for alternatives to OpenMark AI due to factors like pricing, specific feature sets, or platform compatibility that may better suit their unique project needs. When considering alternatives, it is essential to evaluate the specific functionalities offered, such as user interface design, supported models, and benchmarking capabilities. Additionally, users should assess the pricing structure, including free and paid plans, and the degree of support provided for integration and usage. Ultimately, finding the right tool hinges on identifying a solution that aligns with both project requirements and budget constraints.